AI vs Human Accessibility Testing

The conversation around web accessibility has grown louder, and with it, the debate over the best way to test for it. On one side, you have artificial intelligence: fast, scalable, and always learning. On the other, you have human testers, who bring nuance, context, and real-world experience. In 2025, businesses are constantly asking: which method finds more issues?

The answer isn’t a simple numbers game. While AI-powered tools can scan thousands of pages in minutes, they don’t see a website the way a person does. A human tester understands frustration, confusion, and the difference between a compliant website and a truly usable one. This article breaks down the strengths and weaknesses of both AI and human accessibility testing. We’ll look at what each method is good at finding, where they fall short, and how to build a testing strategy that gives you the best return on your investment.

The Current State of AI in Accessibility Testing

AI has become a common first step in accessibility testing for many organizations. Automated tools, most of them rule-based and some augmented by machine learning, can be integrated directly into the development process. They act as a first line of defense, scanning code for violations of the Web Content Accessibility Guidelines (WCAG). These tools are fast enough to run on every new code commit, giving developers immediate feedback.

This speed and efficiency are the main reasons for their popularity. They can process a massive volume of pages, flagging technical errors that might otherwise be missed. However, relying solely on AI creates a dangerously incomplete picture of your website’s accessibility.

What a 57% Detection Rate Really Means

You may have seen the statistic that automated tools find around 57% of accessibility issues. While that number sounds decent, it’s misleading without context. This figure generally refers to the portion of WCAG success criteria that can be tested by a machine without ambiguity. It doesn’t mean the AI finds 57% of the actual barriers a user with a disability will face.

The issues AI can detect are often technical and binary. For example, an AI can check if an image has an alt attribute. It cannot, however, determine if that alt text is meaningful or just a string of keywords. It can see if a button has an accessible name, but it can’t tell you if the purpose of that button is clear to someone using a screen reader. The 57% represents a portion of the total potential issues, not the reality of a user’s experience.

Types of Issues AI Catches Best

AI excels at identifying clear-cut technical problems that violate WCAG rules. These are issues that can be confirmed by looking at the code alone.

Some of the problems AI is good at catching include:

- Missing Alt Text: Checking if an <img> tag has an alt attribute.

- Contrast Ratios: Measuring the color contrast between text and its background to ensure it meets minimum requirements.

- Empty Links and Buttons: Finding <a> or <button> elements that have no text or accessible name.

- Missing Form Labels: Detecting <input> fields that aren’t properly associated with a <label>.

- Incorrect Heading Structure: Flagging instances where heading levels are skipped (e.g., an <h1> followed by an <h3>).

- Absence of a Language Attribute: Verifying that the <html> tag has a lang attribute to inform screen readers of the page’s language.

These automated checks are valuable for maintaining a baseline of technical compliance and catching regressions early.
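To make "confirmed by looking at the code alone" concrete, here is a minimal sketch in Python of three of the checks listed above: a missing alt attribute, a skipped heading level, and a missing lang attribute. It uses only the standard library's html.parser. The names BaselineChecker and run_baseline_checks and the sample markup are illustrative assumptions; this is not how Axe, WAVE, or Lighthouse are implemented.

```python
from html.parser import HTMLParser


class BaselineChecker(HTMLParser):
    """Collects a few binary, code-level findings from an HTML string.

    Hypothetical helper for illustration only.
    """

    def __init__(self):
        super().__init__()
        self.findings = []
        self.last_heading_level = 0  # 0 means no heading seen yet

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag == "html":
            # A missing or empty lang attribute is flagged.
            if not attr_map.get("lang"):
                self.findings.append("html element has no lang attribute")
        elif tag == "img":
            # Only presence is checked; alt="" (decorative image) passes.
            if "alt" not in attr_map:
                src = attr_map.get("src", "?")
                self.findings.append(f"img missing alt attribute: {src}")
        elif tag in ("h1", "h2", "h3", "h4", "h5", "h6"):
            level = int(tag[1])
            # Flag a jump of more than one level, e.g. h1 followed by h3.
            if self.last_heading_level and level > self.last_heading_level + 1:
                self.findings.append(
                    f"heading level skipped: h{self.last_heading_level} "
                    f"followed by h{level}"
                )
            self.last_heading_level = level


def run_baseline_checks(html):
    """Return a list of finding strings for the given HTML."""
    checker = BaselineChecker()
    checker.feed(html)
    checker.close()
    return checker.findings


# Made-up sample page for demonstration.
sample = """<html><body>
<h1>Shop</h1>
<h3>Featured</h3>
<img src="shoe.png">
<img src="hat.png" alt="image">
</body></html>"""

for finding in run_baseline_checks(sample):
    print(finding)
```

Note that the second image, with alt="image", produces no finding. The check is binary, which is exactly why it is cheap to run on every commit and why it says nothing about quality.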

Where Human Testing Still Wins

Despite advancements in AI, human testing remains irreplaceable for achieving true accessibility. A human tester, especially one who uses assistive technology, doesn’t just check for compliance; they evaluate usability. They bring an understanding of human behavior, context, and logic that machines simply cannot replicate. While an AI can tell you if your code is compliant, a person can tell you if your website is usable.

This difference is critical. A site can pass every automated check and still be impossible for a person with a disability to use. Humans catch the show-stopping barriers that AI overlooks.

Context and User Experience Issues

Context is everything in web accessibility. An AI tool might verify that every image has alt text, but it takes a human to know if the description is actually helpful. Imagine an e-commerce site where every product image has the alt text “image.” The site would pass an automated check, but it would be useless for a blind shopper.
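The gap between "has alt text" and "has helpful alt text" is easy to see in code. The sketch below, in Python with only the standard library, runs a presence check and then a crude placeholder heuristic over the same images. The PLACEHOLDER_ALTS word list and all function names are assumptions made up for this example; a heuristic like this can catch the laziest cases, but it cannot judge whether a description actually helps a shopper.

```python
from html.parser import HTMLParser

# Hypothetical list of placeholder values, chosen for illustration only.
PLACEHOLDER_ALTS = {"image", "img", "photo", "picture", "graphic"}


class AltTextCollector(HTMLParser):
    """Records the alt value of every <img>; None means the attribute is absent."""

    def __init__(self):
        super().__init__()
        self.alts = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attr_map = dict(attrs)
            if "alt" in attr_map:
                # A valueless alt attribute is treated like alt="".
                self.alts.append(attr_map["alt"] or "")
            else:
                self.alts.append(None)


def collect_alts(html):
    collector = AltTextCollector()
    collector.feed(html)
    collector.close()
    return collector.alts


def presence_check(alts):
    """What a basic automated rule verifies: every image has the attribute."""
    return all(alt is not None for alt in alts)


def suspicious_alts(alts):
    """Heuristic only: flags alt values that are obvious placeholders."""
    return [
        alt for alt in alts
        if alt is not None and alt.strip().lower() in PLACEHOLDER_ALTS
    ]


# Made-up product listing for demonstration.
page = """
<img src="a.png" alt="image">
<img src="b.png" alt="image">
<img src="c.png" alt="Red canvas sneaker, side view">
"""

alts = collect_alts(page)
print("passes presence check:", presence_check(alts))
print("placeholder alt values:", suspicious_alts(alts))
```

Even with the heuristic, an alt value such as "shoe" on every product would slip through. Deciding whether a description is useful in context remains a human call.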

Human testers evaluate the entire user journey. They can answer questions like:

- Is the navigation flow logical?

- Can a user easily find the information they need?

- Is the language on the page clear and easy to understand?

- Does the site’s functionality make sense, or is it confusing?

These are experience-based questions that fall outside the scope of code-based analysis. A human can spot a confusing checkout process or a poorly worded error message that could stop a user from completing a task, even if no specific WCAG rule is broken.

Complex Interactive Elements

Modern websites are full of dynamic and interactive components that present major challenges for AI testers. Elements like custom widgets, single-page applications, and complex forms often have states and behaviors that automated tools can’t fully process.

A human tester can interact with these elements as a real user would. They can test things like:

- Dynamic Menus: Does the dropdown menu trap the keyboard focus? Can a screen reader user navigate it properly?

- Pop-up Modals: When a modal window appears, is the focus correctly moved into it? Can the user easily close it using a keyboard?

- Custom Forms: Are error messages announced clearly by screen readers when a user makes a mistake? Is the tabbing order logical through a multi-step form?

AI struggles with these scenarios because it can’t always trigger the different states of an element or understand the intended interactive flow. A human tester can walk through these complex paths, uncover hidden bugs, and confirm that the experience is smooth, not just technically compliant.

Cognitive Accessibility Barriers

Cognitive accessibility is one of the most important and overlooked areas of digital inclusion, and it’s almost impossible for AI to test effectively. Cognitive disabilities affect how people process information, solve problems, and perceive the world around them. The resulting barriers are rarely about code; they’re about design and content.

A human tester is essential for identifying these issues. They can assess:

- Clarity of Content: Is the writing simple and direct? Are complex ideas broken down into smaller, digestible chunks?

- Predictability of Design: Is the layout consistent across the site? Do interactive elements behave in an expected way?

- Distraction-Free Experience: Are there flashing ads, auto-playing videos, or other elements that could distract or overwhelm a user with attention-related conditions?

- Error Forgiveness: When a user makes a mistake, does the site provide clear instructions on how to fix it without making them feel stupid?

An AI cannot measure a user’s cognitive load or determine if a page layout is confusing. These assessments demand human judgment, empathy, and a deep understanding of how different people interact with digital content.

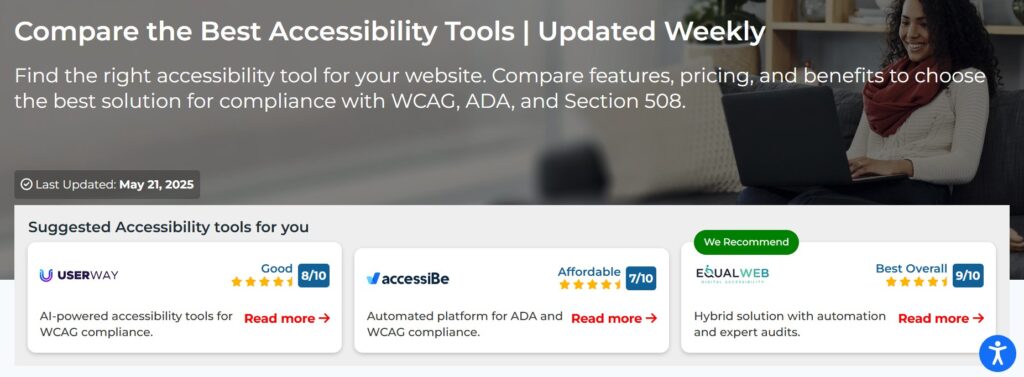

Comparing Popular AI Testing Tools

The market for AI-powered accessibility testing has expanded rapidly. It ranges from large language models (LLMs) that can “read” a page and offer suggestions to dedicated browser extensions that check code against WCAG standards. Each has its place, but none offers a complete solution. Understanding their differences is key to using them effectively as part of a broader testing strategy.

ChatGPT vs Claude vs Gemini for Accessibility

Large language models like ChatGPT, Claude, and Gemini are newcomers to the accessibility field. They offer a different kind of analysis from traditional automated tools. Instead of just checking code, they can interpret content and context to a limited degree. You can ask one of these models to review a page for accessibility, and it might provide suggestions on improving alt text, simplifying language, or making link text more descriptive.

However, their effectiveness is limited and can be unreliable.

- ChatGPT often provides good, general advice but can miss technical specifics and may “hallucinate” issues that don’t exist.

- Claude is often better at analyzing content and tone, which can be useful for spotting some cognitive barriers, but it also struggles with the technical side.

- Gemini can analyze both text and images, offering a more integrated review, but its findings are still high-level and lack the precision of dedicated tools.

The biggest risk with LLMs is their lack of sourcing. Working from a rendered page or a description, they often can’t point to the specific line of code causing an issue, and their advice tends to be generic. They are best used as brainstorming partners, not as auditing tools.

Automated Tools: Axe, WAVE, and Lighthouse

Axe, WAVE, and Lighthouse are the workhorses of automated accessibility testing. They are browser extensions or developer tools that scan a page’s code and report specific WCAG violations. They are much more reliable than LLMs for technical checks.

- Axe: Developed by Deque, Axe is a highly respected rules engine that powers many other tools. It’s known for its low rate of false positives and clear remediation advice. It integrates well into automated development workflows, making it a favorite for teams practicing continuous integration.

- WAVE: Created by WebAIM, WAVE is an excellent educational tool. It visually annotates a live webpage, showing accessibility errors and alerts directly on the page. This visual feedback is very helpful for designers and content creators who may not be comfortable reading code-level reports.

- Lighthouse: Built into Google Chrome’s DevTools, Lighthouse provides an accessibility score as part of a broader audit that also covers performance. It’s convenient and easy to use, making it a great starting point for developers. However, it runs only a subset of the axe-core rules, so its accessibility checks are not as exhaustive as those in Axe or WAVE.

These tools are essential for catching low-hanging fruit. They find the definite, clear-cut errors. But they all come with the same warning: they cannot test everything. They are one piece of the puzzle, not the whole solution.

Cost Analysis: AI vs Human Testing ROI

When deciding on a testing strategy, budget is always a factor. At first glance, AI testing seems far cheaper. Automated tools can be free or low-cost, and they run checks almost instantly. Human testing, on the other hand, requires paying skilled professionals for their time. But a simple cost comparison is short-sighted. The true return on investment (ROI) comes from looking at the total cost of finding and fixing bugs, as well as the cost of not finding them.

Time Investment Comparison

AI tools deliver results in seconds. A developer can run a Lighthouse or Axe scan and get a report almost immediately. This speed is perfect for quick checks during development. It allows teams to catch simple errors before they ever get merged into the main codebase.

Human testing is a much bigger time commitment. A manual audit of a website can take days or even weeks, depending on its size and complexity. Testers must meticulously go through every page, every interactive element, and every user flow with different assistive technologies.

However, the time saved by AI upfront can be lost later in the process. Automated reports are often filled with false positives or low-impact issues that take developer time to investigate and dismiss. A human audit, while slower, delivers a curated list of real, high-impact problems. This means developers spend their time fixing things that actually matter to users, leading to a more efficient use of resources in the long run.
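One way to cut the triage cost of an automated report is to sort it before anyone reads it. The sketch below, in Python, assumes the scan was saved in the JSON shape that axe-core produces: a violations list whose entries carry an id, an impact of critical, serious, moderate, or minor, and a nodes list. The triage function and the sample data are illustrative assumptions, not part of any tool.

```python
# Lower number means higher priority.
IMPACT_ORDER = {"critical": 0, "serious": 1, "moderate": 2, "minor": 3}


def triage(results):
    """Return one row per violated rule, most severe and most frequent first."""
    rows = [
        {
            "rule": violation["id"],
            "impact": violation.get("impact") or "minor",
            "count": len(violation.get("nodes", [])),
        }
        for violation in results.get("violations", [])
    ]
    # Sort by severity first, then by how many elements are affected.
    return sorted(
        rows,
        key=lambda row: (IMPACT_ORDER.get(row["impact"], 4), -row["count"]),
    )


# Made-up scan results in the assumed axe-core shape.
scan = {
    "violations": [
        {"id": "region", "impact": "moderate", "nodes": [{}] * 12},
        {"id": "image-alt", "impact": "critical", "nodes": [{}] * 3},
        {"id": "color-contrast", "impact": "serious", "nodes": [{}] * 7},
    ]
}

for row in triage(scan):
    print(f'{row["impact"]:<9} {row["rule"]:<15} {row["count"]} element(s)')
```

Sorting does not remove false positives, but it puts the findings most likely to block users at the top, so investigation time goes there first.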

Accuracy and False Positive Rates

Accuracy is where the value of human testing becomes clear. Automated tools are notorious for producing “false positives”: flagging things as errors when they aren’t. For example, a tool might flag text layered over a background image or gradient as low contrast because it cannot work out the actual color behind the text, even though the text is perfectly readable. Developers then have to spend time chasing down these non-issues.
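The contrast measurement behind such a flag is pure arithmetic, which is why tools can run it at scale and also why they misfire when they guess the wrong colors. Here is a minimal Python sketch of the WCAG 2.x contrast ratio, built on the relative luminance formula from the guidelines. The function names are assumptions for this example, and it only handles six-digit hex colors.

```python
def relative_luminance(hex_color):
    """Relative luminance of an sRGB color given as '#rrggbb' (WCAG 2.x)."""
    hex_color = hex_color.lstrip("#")
    channels = [int(hex_color[i:i + 2], 16) / 255 for i in (0, 2, 4)]

    def linearize(c):
        # Threshold 0.03928 is the value published in WCAG 2.x.
        return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

    r, g, b = (linearize(c) for c in channels)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background):
    """Contrast ratio (L_lighter + 0.05) / (L_darker + 0.05)."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa_normal_text(foreground, background):
    """WCAG AA requires at least 4.5:1 for normal-size text."""
    return contrast_ratio(foreground, background) >= 4.5


for fg in ("#000000", "#767676", "#777777"):
    ratio = contrast_ratio(fg, "#ffffff")
    verdict = "pass" if passes_aa_normal_text(fg, "#ffffff") else "fail"
    print(f"{fg} on white: {ratio:.2f}:1 ({verdict})")
```

The formula needs two solid colors as input. When the real background is a photo, a gradient, or a layered element, a tool has to pick a color to compare against, and a wrong pick is one common source of the false positives described above.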

Worse than false positives are the “false negatives”: the critical issues that AI completely misses. An AI tool will not detect that your checkout process is so confusing that screen reader users abandon their carts in frustration. It won’t find that your instructional video is unusable for someone with a vestibular disorder because of excessive motion. These are the kinds of barriers that lead to lost revenue and legal risk.

Experienced human testers produce far fewer false positives. Their findings are based on actual user experience, so the issues they report are real problems that affect real people. Investing in a human audit means you’re paying for accuracy and certainty, which can save a huge amount of wasted time and effort.

The Hybrid Approach: Best of Both Worlds

The debate over AI vs. human testing isn’t about picking a winner. The most mature and effective accessibility strategies don’t choose one over the other; they use both. A hybrid approach combines the speed and scale of automation with the depth and accuracy of human expertise. This allows you to catch the widest range of issues efficiently and create an experience that is both compliant and genuinely usable.

By integrating both methods into your workflow, you create a system of checks and balances. AI handles the high-volume, repetitive work, freeing up human testers to focus on the complex challenges where their skills are most needed.

When to Use AI Testing

AI testing is most valuable when used early and often throughout the development lifecycle. It’s a preventive measure, not a final audit.

Here are the ideal times to use automated tools:

- During Development: Integrate automated checks (like Axe) into your build process. This provides developers with instant feedback, allowing them to fix simple bugs on the spot before they become bigger problems.

- For Quick Scans: Use browser extensions like WAVE or Lighthouse for quick spot-checks on new components or pages. This is a great way for designers and content creators to get a quick sense of a page’s technical health.

- Monitoring for Regressions: Run automated scans regularly across your entire site to ensure that new code changes haven’t introduced new, basic accessibility errors.

Think of AI as your smoke detector. It’s great at warning you of common dangers, but it’s not a substitute for a full safety inspection.
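For regression monitoring, the useful question is usually not "how many issues exist" but "what is new since the last scan". A minimal Python sketch of that comparison follows. It assumes both scans were saved in the axe-core JSON shape, where each violation has an id and a nodes list, and each node has a target list of CSS selectors. The names new_violations and exit_code are hypothetical helpers for this example.

```python
def violation_keys(results):
    """Flatten scan results into a set of (rule id, selector) pairs."""
    keys = set()
    for violation in results.get("violations", []):
        for node in violation.get("nodes", []):
            selector = " ".join(str(part) for part in node.get("target", []))
            keys.add((violation["id"], selector))
    return keys


def new_violations(baseline, current):
    """Return pairs present in the current scan but not in the baseline."""
    return sorted(violation_keys(current) - violation_keys(baseline))


def exit_code(regressions):
    """A CI job could pass this value to sys.exit() to fail the build."""
    return 1 if regressions else 0


# Made-up scans: the baseline has one known issue, the new build adds another.
baseline_scan = {
    "violations": [
        {"id": "color-contrast", "nodes": [{"target": ["#footer a"]}]},
    ]
}
current_scan = {
    "violations": [
        {"id": "color-contrast", "nodes": [{"target": ["#footer a"]}]},
        {"id": "image-alt", "nodes": [{"target": ["img.hero"]}]},
    ]
}

regressions = new_violations(baseline_scan, current_scan)
for rule, selector in regressions:
    print(f"new violation: {rule} at {selector}")
print("exit code:", exit_code(regressions))
```

Comparing against a baseline lets a team adopt automated checks on a site with existing debt: known issues stay on the backlog, while anything newly introduced fails the build. Selectors can shift when markup changes, so a real pipeline would likely need a more stable way to match elements.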

When Human Expertise is Essential

Human testing should be brought in at critical points in a project’s lifecycle to validate the user experience and catch what automation misses. It’s a diagnostic and validation step.

Human expertise is non-negotiable for:

- Design and Prototyping: Have accessibility experts review wireframes and designs before any code is written. This can prevent fundamental design flaws that are expensive to fix later.

- Auditing Key User Flows: Before a major launch, have human testers, including people with disabilities, go through critical paths like registration, checkout, or using a core feature.

- Testing Complex Interactions: Any time you have dynamic menus, complex forms, or custom-built widgets, you need a human to test their real-world usability with assistive technologies.

- Full Compliance Audits: For legal compliance (like meeting ADA or Section 508 standards), a comprehensive manual audit is needed to complete a VPAT (Voluntary Product Accessibility Template) accurately or to claim that the site conforms to WCAG.

Humans provide the context, empathy, and real-world validation that ensures your site is not just technically sound but truly welcoming to everyone.

Future of AI in Accessibility Testing

The role of AI in accessibility is only going to grow, but its focus is likely to shift. Instead of just being a code scanner, the next generation of AI will probably get better at understanding context and user behavior. We may see tools that can analyze entire user flows, predict potential points of confusion, and offer more nuanced recommendations for things like cognitive accessibility.

Machine learning models will continue to get better at identifying patterns associated with inaccessible design, potentially reducing the rate of false positives. AI might also play a bigger role in generating remediation advice, offering developers ready-to-use code snippets to fix common problems.

However, the need for human oversight will remain. As AI becomes more powerful, we’ll need human experts more than ever to validate its findings, interpret its suggestions, and test for the subtle, emotional, and psychological aspects of usability that will likely always be beyond a machine’s grasp. The future isn’t AI replacing humans; it’s AI empowering humans to do their jobs better.

Choosing the Right Testing Strategy for Your Business

There is no one-size-fits-all answer for accessibility testing. The right strategy for your business depends on your budget, your team’s skills, your product’s complexity, and your tolerance for legal risk. However, the most effective approach for almost any organization is a hybrid one.

Start by embedding automated testing into your development process. Use free tools like Axe, WAVE, and Lighthouse to build a strong foundation of technical compliance. This will catch a significant number of bugs early, saving you time and money.

Then, strategically invest in human testing. You don’t necessarily need a full-time team. You can hire an accessibility consulting firm for periodic manual audits, especially before launching a new product or feature. Prioritize testing for your most critical user journeys: the paths that are most important to your business and your customers.

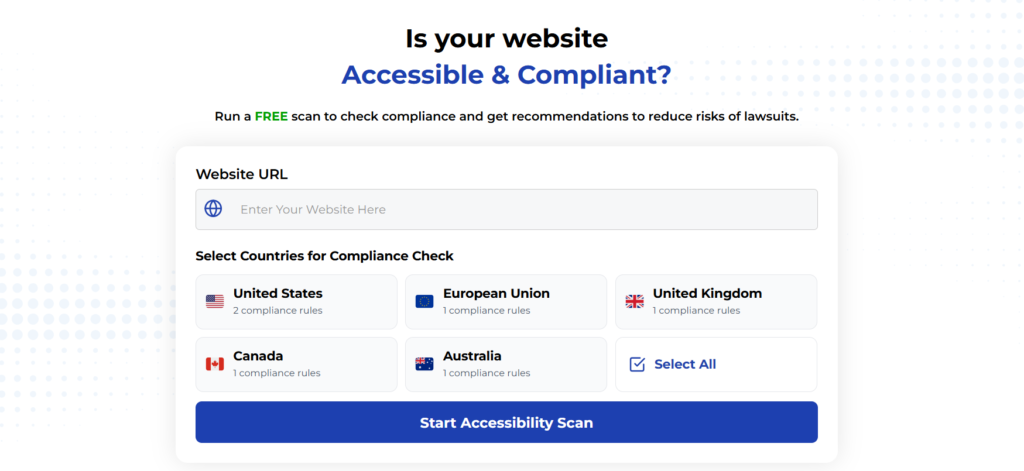


Final Thoughts

Most importantly, involve people with disabilities in your testing process. Their lived experience is the most valuable data you can get. Their feedback will move you beyond simple compliance and toward creating products that people genuinely love to use. Have you considered how accessible your digital products truly are?

Start your compliance journey, update your accessibility statement, and keep the European Accessibility Act (EAA) top of mind in every project going forward.

Commit to accessibility testing today. The sooner you start, the sooner everyone can benefit from your content and services, no exceptions.
