Advanced Testing Tools for Real-World WCAG Workflows

We’re rolling out a multi-part series that breaks down accessibility testing tools in plain language, with hands-on steps, realistic use cases, and straight talk on WCAG, ADA, and Section 508 compliance. No fluff, no filler. Each piece will show how teams can bake accessibility into design, content, QA, and CI/CD so that fixes stick and audits don’t become surprise fire drills. We’ll pull from platform docs, expert practice, and our own test runs to show what actually works and where tools fall short, because no single scanner catches everything and automation still needs human checks.

This announcement previews what’s coming and what to expect per tool family, including strengths, gaps, setup tips, and who benefits most (developers, designers, content authors, QA, or business owners). We’ll also include practical WCAG mapping, keyboard and screen reader checks, contrast testing, and reporting workflows that make a dent in the backlog, not just in theory but in day-to-day delivery.

Have you looked at your last Lighthouse score and wondered why real users still struggle with forms or modals? That’s exactly the gap this series will close, tool by tool and step by step.

What this series covers

- Honest breakdowns of browser extensions, developer tools, enterprise suites, widgets, and CI add-ons.

- How to combine automated checks with manual testing so teams don’t miss critical blockers.

- WCAG 2.1/2.2 coverage patterns per tool and where human judgment still matters (labels, focus, names, error help).

- CI/CD integrations that stop regressions before code merges.

- Realistic remediation tips that match how teams actually ship.

We’ll reference our comparison work and testing roundups so you can go deeper as we publish each long-form piece in the series.

Series lineup at a glance

Below you’ll find a short brief for each tool family we’ll cover, with a focus on how teams can use them right now and what we’ll unpack in the full write-ups.

1) WAVE by WebAIM: visual page checks for quick wins

What it is: A free browser extension that flags issues on a live page and overlays icons next to the exact elements in question.

Why it matters: Designers and content teams can see errors in context (missing alt text, contrast problems, heading levels) without digging through raw markup.

Where it shines:

- Fast page-by-page feedback during page builds.

- Great for learning patterns and spotting template issues early.

Limits:

Coming in the full article:

- A “10-minute sweep” checklist to run before sign-off.

- Common false assumptions and how to verify with keyboard and screen reader passes.

- Contrast fixes that avoid brand blowback.

We’ll link to our tools comparison piece for extra context as the article goes live.
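
When a tool flags a contrast problem, it helps to be able to check a color pair by hand. Here is a minimal Python sketch of the WCAG 2.x contrast ratio formula. The function names are ours and purely illustrative; the thresholds are the AA values (4.5:1 for normal text, 3:1 for large text).

```python
def _linear(channel: int) -> float:
    """Convert one 8-bit sRGB channel to linear light, per the WCAG 2.x definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#rrggbb' (or 'rrggbb')."""
    value = hex_color.lstrip("#")
    r, g, b = (int(value[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linear(r) + 0.7152 * _linear(g) + 0.0722 * _linear(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """Contrast ratio between two colors; ranges from 1.0 to 21.0."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: str, background: str, large_text: bool = False) -> bool:
    """True if the pair meets the WCAG AA contrast minimum for the given text size."""
    return contrast_ratio(foreground, background) >= (3.0 if large_text else 4.5)
```

A check like this is handy when adjusting a brand color: nudge the hex value, rerun `passes_aa`, and stop at the first shade that clears the threshold.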

2) Axe DevTools and axe-core: developer-first checks with low noise

What it is: A rules engine and set of extensions trusted by engineering teams, known for fewer false positives and strong remediation guidance.

Why it matters: Developers can block common violations during local dev and CI, reducing late-stage churn.

Where it shines:

- Clear rule breakdowns mapped to WCAG techniques.

- Strong CI usage with test frameworks and pipelines.

Limits:

Coming in the full article:

- Sample CI wiring and how to tune thresholds.

- Triage flow for repeated violations (focus trap, ARIA misuse).

- When to add manual checks (names, instructions, error recovery).

We’ll reference our automated testing comparison for deeper setup paths.
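
To give a feel for what "tuning thresholds" can mean, here is a rough Python sketch of a CI gate. It assumes the axe results have already been saved as JSON in the shape axe-core returns (a `violations` list whose entries carry `id`, `impact`, and `nodes`). The function name and the default cut-off of "serious" are our assumptions, not anything axe prescribes.

```python
# Impact levels as axe-core reports them, from least to most severe.
IMPACT_ORDER = ["minor", "moderate", "serious", "critical"]


def blocking_violations(results: dict, min_impact: str = "serious") -> list[dict]:
    """Return a summary of violations at or above min_impact.

    Violations with a missing or unrecognised impact are treated as blocking,
    so nothing slips through the gate silently.
    """
    threshold = IMPACT_ORDER.index(min_impact)
    blocking = []
    for violation in results.get("violations", []):
        impact = violation.get("impact")
        rank = IMPACT_ORDER.index(impact) if impact in IMPACT_ORDER else len(IMPACT_ORDER)
        if rank >= threshold:
            blocking.append({
                "id": violation.get("id"),
                "impact": impact,
                "nodes": len(violation.get("nodes", [])),
            })
    return blocking
```

In a pipeline, a wrapper script could load the saved results with `json.load`, call `blocking_violations`, and exit non-zero when the list is not empty. Starting at "critical" and tightening to "serious" later is one way to introduce the gate without blocking every merge on day one.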

3) Lighthouse (Chrome DevTools): quick baseline, not a full audit

What it is: A built-in Chrome audit that covers performance, SEO, and accessibility with an overall score and a list of failed checks.

Why it matters: Teams can run a first pass fast and catch obvious misses on any page in seconds.

Where it shines:

Limits:

Coming in the full article:

- What the score hides and how to validate with other tools.

- A pre-merge ritual that pairs Lighthouse with axe and a short keyboard check.

- The right way to talk about Lighthouse scores in status updates.

We’ll connect to our tool comparisons for context on coverage differences.

4) Siteimprove and similar enterprise platforms: governance and scale

What it is: A platform for large sites that monitors accessibility across many pages, supports ownership, and tracks progress.

Why it matters: Enterprises need dashboards, roles, and trends, not just one-off page scans.

Where it shines:

Limits:

Coming in the full article:

- How to set rules that reflect WCAG 2.2 priorities.

- The right way to assign owners and SLAs.

- Pairing platform alerts with monthly keyboard and screen reader checks.

We’ll reference our free-vs-paid comparison for tradeoffs.

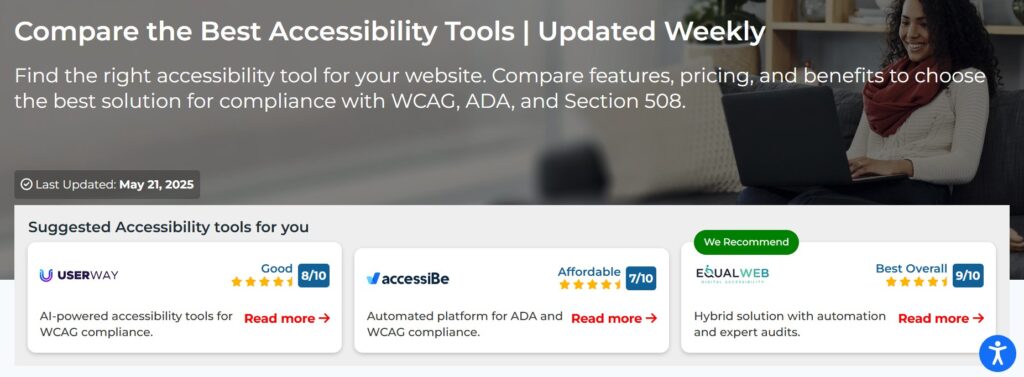

5) UserWay vs AccessiBe vs EqualWeb: widgets, automation, and tradeoffs

What they are: Widget-based and hybrid solutions that add overlays or AI fixes in real time; some pair automation with human audits.

Why they matter: Business owners often start here for faster setup and on-page controls (contrast, text size, focus tweaks).

Where they shine:

Limits:

- Overlays can’t fix template semantics, naming, or flow by themselves; human reviews still matter for true conformance.

Coming in the full article:

- When a widget helps, and when it creates false confidence.

- Hybrid plans that combine automation with expert audits and why that matters.

- How to document decisions for legal and procurement teams.

We’ll lean on our feature and pricing comparisons across these tools.

6) Pa11y and AccessLint: simple automation and GitHub-native reviews

What they are: Lightweight tools for command-line scans (Pa11y) and pull request feedback in GitHub (AccessLint).

Why they matter: Teams can catch common issues during review and keep regressions out of main branches.

Where they shine:

Limits:

Coming in the full article:

- A minimal CI recipe with Pa11y CI.

- Reducing comment noise in AccessLint.

- Teaching moments: how PR comments level up team knowledge.

We’ll expand on findings from our automated testing comparison.
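
One way to keep regressions out of main without drowning in legacy issues is to fail only on findings that are new compared with a stored baseline. The Python sketch below is tool-agnostic and hypothetical: it assumes each scanner issue has already been reduced to a rule code plus a CSS selector, and the `Finding` type and `new_findings` function are names we made up for illustration.

```python
from typing import Iterable, NamedTuple


class Finding(NamedTuple):
    """One scanner issue, reduced to the fields needed to compare two runs."""
    rule: str       # rule or check identifier reported by the scanner
    selector: str   # CSS selector of the offending element


def new_findings(baseline: Iterable[Finding], current: Iterable[Finding]) -> list[Finding]:
    """Findings present in the current scan but absent from the baseline, sorted for stable output."""
    return sorted(set(current) - set(baseline))
```

A CI job could store the baseline from the main branch, run the scanner on the pull request, and fail only when `new_findings` returns something. Selectors can shift when markup changes, so treat this as a first filter rather than a precise diff.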

7) Microsoft Accessibility Insights, IBM Equal Access, and similar checkers

What they are: Free tools that add structured checks in the browser, with guided flows for manual checks and rule explanations.

Why they matter: They bridge the gap between raw automation and hands-on review by steering testers through key WCAG areas.

Where they shine:

Limits:

Coming in the full article:

- Using guided flows for form validation and error patterns.

- Pairing tool output with NVDA and VoiceOver smoke tests.

- How to log issues that align with WCAG success criteria.

We’ll point to W3C tool lists for selection tips and broader context.

8) Content readability tools: Grammarly, Hemingway, and plain language checks

What they are: Writing aids that help teams improve clarity, sentence length, and structure for cognitive and language accessibility.

Why they matter: Clean language and clear instructions reduce errors and abandonment, especially in forms and help content.

Where they shine:

Limits:

- They don’t understand context, intent, or task flow like a human reviewer with accessibility skills.

Coming in the full article:

- A content checklist for headings, labels, and error help that aligns with WCAG 2.1/2.2.

- Jargon triage: how to remove reading barriers without dumbing things down.

- Team rituals that keep reading levels in check across new pages.

We’ll build on our accessibility writing comparison for practical wins.
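
For a sense of what a readability score measures, here is a small Python sketch of the Flesch Reading Ease formula. The syllable counter is a deliberately naive vowel-group heuristic of our own, so expect approximate scores; we are not claiming that Grammarly or Hemingway compute their scores this way.

```python
import re


def count_syllables(word: str) -> int:
    """Rough syllable estimate: count vowel groups, discount a trailing silent 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_reading_ease(text: str) -> float:
    """Flesch Reading Ease: higher scores mean easier text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text needs at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (
        206.835
        - 1.015 * (len(words) / len(sentences))
        - 84.6 * (syllables / len(words))
    )
```

Comparing the score of a form's help text before and after an edit gives a quick signal, though it says nothing about whether the instructions are actually correct or complete.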

9) Social scheduling tools with accessibility features: Hootsuite vs Buffer

What they are: Scheduling platforms that now include better alt text support, keyboard handling, and workflows that reduce missed descriptions.

Why they matter: Social posts often carry images and video; adding alt text and captions needs to fit into the publishing process, not happen after the fact.

Where they shine:

Limits:

- Platform constraints vary; creators still need media guidelines to hit WCAG-style clarity and contrast for graphics.

Coming in the full article:

- An alt text checklist for brand teams.

- Contrast and text-in-image tips for social cards.

- Approval flows that stop silent posting of media without descriptions.

We’ll carry over insights from our feature comparison for these tools.
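
The idea of an approval flow that refuses media without descriptions can be sketched in a few lines of Python. Everything here is hypothetical: `MediaItem`, `DraftPost`, and `can_publish` are illustrative names, not part of the Hootsuite or Buffer APIs.

```python
from dataclasses import dataclass, field


@dataclass
class MediaItem:
    """An image or video attached to a draft post (hypothetical structure)."""
    filename: str
    alt_text: str = ""


@dataclass
class DraftPost:
    """A post waiting for approval (hypothetical structure)."""
    text: str
    media: list[MediaItem] = field(default_factory=list)


def missing_descriptions(post: DraftPost) -> list[str]:
    """Filenames of attached media whose alt text is empty or only whitespace."""
    return [item.filename for item in post.media if not item.alt_text.strip()]


def can_publish(post: DraftPost) -> bool:
    """A post is publishable only when every attached media item has a description."""
    return not missing_descriptions(post)
```

A check like this only confirms that a description exists. Whether the description is useful still needs a human reviewer.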

10) W3C tool lists and selection advice: choose tools that fit team reality

What it is: Curated W3C lists and guidance for selecting evaluation tools that match needs, platforms, and workflows.

Why it matters: Buying or adopting a tool without fit leads to shelfware and false confidence.

Where it shines:

Limits:

Coming in the full article:

- A short selection rubric teams can actually use.

- A 2-week pilot plan with success criteria.

- How to combine 2–3 tools for the right coverage.

How we’ll structure every in-depth piece

Each tool article in the series will include:

- Who should use it: devs, QA, designers, content, or owners, and at what stage (design, dev, pre-merge, production).

- Setup in under 20 minutes: clear steps and pitfalls to avoid.

- What to test first: top 10 checks per tool that map cleanly to WCAG success criteria.

- Where it breaks down: common false positives or blind spots and how to double-check with keyboard and screen reader passes.

- Fixing patterns: repeatable solutions for names, labels, focus, contrast, and error messages with before/after reasoning.

- Reporting: how to write issues that devs can fix, and how to avoid vague tickets.

- Proof for stakeholders: what to screenshot or export and how to talk about risk without over-promising compliance.

We’ll keep each section tight and easy to scan, with clear takeaways per role and a short checklist to use immediately on live work.

Why we combine tools instead of chasing a silver bullet

- Automation catches repeatable violations fast, but it can miss names, context, or task-level failures that block real users.

- Human testing finds the issues that hurt most (keyboard traps, broken focus order, unclear labels, weak error copy), even when automation says “all good”.

- Teams do best with a small, reliable stack: one strong developer checker, one visual page tool, one CI safety net, and scheduled manual checks with screen readers and keyboard passes.

We’ll show practical stacks for different team sizes and budgets in the series launch.

What to do before the series starts

- Run a baseline: pick 5 high-traffic pages and test with WAVE, axe, and a 5-minute keyboard pass that hits menus, forms, and dialogs.

- Log only what matters: focus on names, labels, landmarks, focus movement, contrast, and error help; avoid noisy “someday” tickets.

- Create a weekly 30‑minute slot for quick retests and one real-user journey, like signup or checkout.

These small rituals add up and make the later deep-dives more valuable because you’ll have real data to compare.

What we’ll release first

- WAVE and axe together: how to pair visual overlays with developer-grade rules for faster fixes.

- Automated testing in CI: Pa11y CI and AccessLint settings that catch regressions without drowning reviewers in comments.

- Lighthouse with limits: the right way to use the score and when to throw it out in favor of manual checks.

We’ll connect each article to our existing comparison pieces so readers can benchmark choices and set expectations with their teams.

FAQs we’ll address in the series

- Is a high Lighthouse score enough for legal risk? Short answer: no; add manual checks and assistive tech testing.

- Can a widget make a site compliant on its own? No; use overlays carefully and combine with structural fixes and human audits.

- How often should teams run full scans? Treat it as ongoing; monitor weekly and do deeper passes before big releases.

- What does “good enough” look like for small teams? A small stack, consistent manual checks, and a short, living remediation list.

We’ll give straight answers with repeatable steps, not slogans.

Want to stay ahead of the rollout? Start with the tools you already have: run WAVE on your top pages, add axe to your browser, and try a 5‑minute keyboard pass on your primary flow this week. If you manage releases, wire a simple Pa11y check into CI so you stop merging obvious misses. When the first article drops, you’ll have real findings to validate and real wins to ship.

Our goal is simple: help teams fix what matters faster with tools they understand and workflows they can keep up with.

Stay tuned: the first article lands soon.

What we’ll keep doing in every article

- Use clear headings and short sections with action-first language, so screen reader users and skimmers can get to the point quickly.

- Stick to simple English and avoid jargon that hides what needs fixing.

- Give steps teams can run the same day, not just theory.

- Show the limits, not just the highlights, and tell you when to add manual checks or real-user testing sessions.

If you care about WCAG, ADA, and Section 508, and you want results without burning out your team, this series is for you.

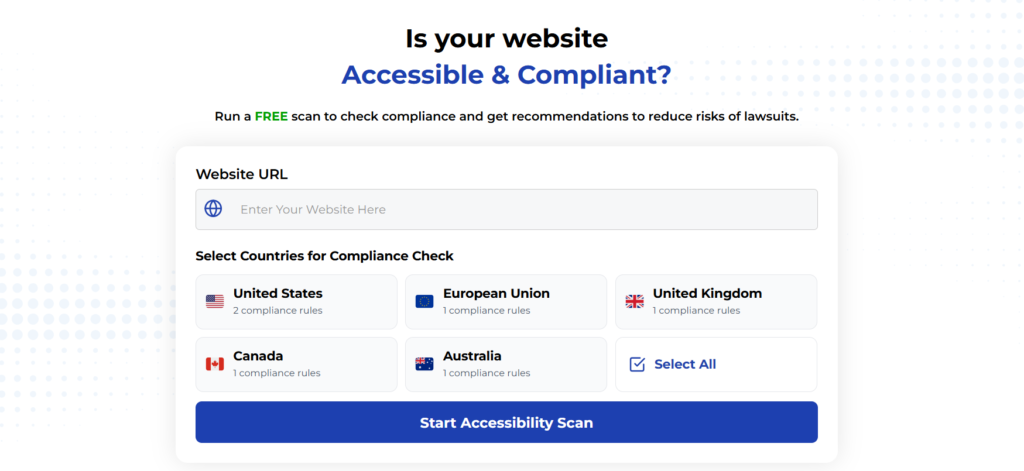

Final Thoughts

We believe good accessibility work belongs in everyday delivery, not just audits at the end. This series will help teams fold the right tools into real workflows and get steady wins that help users and reduce risk at the same time.

Start your compliance journey, update your accessibility statement, and keep the European Accessibility Act (EAA) top of mind in every project going forward.
