The Current State of AI Accessibility Testing: What 57% Really Means

When we talk about AI finding 57% of accessibility issues, it’s important to understand what’s being counted. This figure represents the portion of WCAG rules that are “machine-testable.” For example, an automated tool can easily verify if a page has a title, if headings are in a logical order (H1, H2, H3), or if form fields have associated labels. These are binary checks: the element is either present and correctly coded, or it’s not.
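To make “binary check” concrete, here is a minimal sketch of two of the checks mentioned above (page title and form labels), written with Python’s standard `html.parser`. The names `BasicChecks` and `run_basic_checks` are made up for illustration, and the label check is deliberately simplified: it only recognizes `<label for="...">` and ignores wrapping labels, `aria-label`, and `aria-labelledby`, which real tools also accept.

```python
from html.parser import HTMLParser


class BasicChecks(HTMLParser):
    """Collects the facts needed for two binary checks: page title and form labels."""

    def __init__(self):
        super().__init__()
        self.in_title = False
        self.title_text = ""
        self.label_targets = set()  # values seen in <label for="...">
        self.field_ids = []         # id (or None) of each visible form field

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self.in_title = True
        elif tag == "label" and attrs.get("for"):
            self.label_targets.add(attrs["for"])
        elif tag in ("input", "select", "textarea"):
            # Hidden fields and buttons do not need a visible label.
            if attrs.get("type") not in ("hidden", "submit", "button"):
                self.field_ids.append(attrs.get("id"))

    def handle_endtag(self, tag):
        if tag == "title":
            self.in_title = False

    def handle_data(self, data):
        if self.in_title:
            self.title_text += data


def run_basic_checks(html):
    """Return a pass/fail style summary for the two checks (simplified sketch)."""
    parser = BasicChecks()
    parser.feed(html)
    unlabeled = [fid for fid in parser.field_ids if fid not in parser.label_targets]
    return {
        "has_title": bool(parser.title_text.strip()),
        "unlabeled_fields": unlabeled,
    }
```

Each result is either true or false, present or missing. Nothing in the check says whether the title or the label text is actually meaningful to a user.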

The improvement from older tools to modern, AI-powered ones is significant. AI is better at reducing “false positives,” meaning it’s less likely to flag an issue that isn’t really a problem. This saves developer time and builds trust in the tools. With growing legal pressure, like the European Accessibility Act’s 2025 deadline and the thousands of ADA-related lawsuits filed annually in the US, companies need reliable ways to check their sites quickly. AI-driven scanning meets that need by providing a fast first pass.

But the 43% (or more) that AI misses is where the most serious accessibility problems often hide. These are issues that aren’t about code rules, but about human experience. An AI can’t tell you if your alt text is actually helpful or just a jumble of keywords. It can’t navigate your website with a keyboard to see if users get stuck in a “keyboard trap.” And it certainly can’t tell you if the user journey is confusing for someone with a cognitive disability. The 57% is a great starting point, but it’s not the finish line.

AI Tool Comparison: ChatGPT vs Claude vs Gemini

Beyond dedicated scanning tools, general-purpose AI assistants like ChatGPT, Claude, and Gemini have become popular for quick accessibility questions. These large language models aren’t built specifically for accessibility testing, but they can act as helpful aids for developers and designers. Each has its own personality and strengths. Think of them less as automated testers and more as conversational partners that can help you think through a problem.

It’s important to set expectations. None of these tools can “audit” a live website. Instead, you provide them with code snippets, descriptions of user interfaces, or screenshots, and they give you feedback based on their training data. Their advice is a starting point for investigation, not a final verdict.

Image Alt Text Generation Accuracy

Generating good alt text, the descriptive text that screen readers announce for images, is a common task where people turn to AI. The results vary quite a bit.

ChatGPT is generally decent at writing descriptions for simple, straightforward images, like a photo of a product. However, it often struggles with more complex visuals, such as charts, graphs, or busy screenshots. It might generate overly detailed descriptions that aren’t helpful or miss the essential context that a screen reader user needs to understand why the image is important.

Claude often provides more thoughtful and ethically minded alt text. It’s better at considering the purpose of the image within the page. However, it can sometimes be more verbose and less direct, requiring a human to edit its suggestions down to be more concise for a better user experience.

Gemini, with its strong multimodal abilities, can be quite effective at analyzing images within the context of surrounding text and UI elements. Its connection to Google’s larger data ecosystem gives it an edge in understanding real-world objects and scenes, but its guidance on how to write alt text that is truly useful can sometimes be generic.

Code Review and ARIA Recommendations

Developers often paste code snippets into these AIs to get a quick check for accessibility problems.

ChatGPT can be a helpful starting point for explaining basic concepts. If you ask it about a specific WCAG rule or how to use an ARIA (Accessible Rich Internet Applications) attribute, it usually gives a clear, easy-to-understand answer. But be careful: it has been known to provide outdated code or suggest fixes that don’t fully solve the underlying issue. You always need to verify its advice against the official WCAG documentation.

Claude excels at more detailed and systematic analysis. It’s particularly good at explaining the “why” behind an accessibility requirement, focusing on the human impact. It will often frame its recommendations around inclusion and user rights, which can be useful for team education. Its code suggestions are generally cautious and thorough.

Gemini’s advantage comes from its integration with Google’s accessibility tools. When giving advice, it can sometimes reference how a specific code implementation will behave with screen readers like TalkBack. This provides a more practical, real-world context that is often missing from purely theoretical advice.

Content Structure Analysis

A logical content structure is vital for screen reader users, who often navigate a page by jumping between headings.

When it comes to analyzing structure, most AI assistants can handle the basics. You can describe your heading outline to ChatGPT, and it will tell you if you’ve skipped a level (e.g., going from an H2 to an H4). It can spot obvious structural problems that would fail an automated check.
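The skipped-level check is simple enough to sketch in a few lines. The example below is an illustration only, not how any particular assistant or scanner implements it; `HeadingCollector` and `find_skipped_levels` are hypothetical names. It reports every place where a heading jumps down more than one level.

```python
import re
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Records the level (1-6) of every heading in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if re.fullmatch(r"h[1-6]", tag):
            self.levels.append(int(tag[1]))


def find_skipped_levels(html):
    """Return (previous, current) pairs where a heading level was skipped."""
    collector = HeadingCollector()
    collector.feed(html)
    skips = []
    for previous, current in zip(collector.levels, collector.levels[1:]):
        # Moving back up (H4 to H2) is fine; only deeper jumps count as skips.
        if current > previous + 1:
            skips.append((previous, current))
    return skips
```

For an outline of H1, H2, H4, H2 this returns `[(2, 4)]`: the jump from H2 to H4 is flagged, while the return from H4 to H2 is not.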

Claude is a bit more advanced in this area. It’s better at identifying potential issues related to cognitive load or confusing information architecture. It might point out that while your headings are technically correct, the flow of information could be confusing for users with attention differences or learning disabilities.

Gemini uses its ability to process visual layouts to its advantage here. If you provide a screenshot, it can compare the visual hierarchy of the page to the underlying code structure. This helps it spot situations where a page looks structured but is a mess for anyone who can’t see the screen.

AI Integration with Traditional Testing Tools

The biggest shift in accessibility testing isn’t just the rise of standalone AI chatbots. It’s how AI is being built directly into the professional-grade tools that developers and testers already use. Companies aren’t asking teams to add a separate “AI step” to their workflow. Instead, they are making existing tools smarter, faster, and more accurate. This is where the real efficiency gains are happening.

Axe + AI Enhancement Features

The axe-core rules engine, developed by Deque, is the foundation for many popular accessibility tools, including Google’s Lighthouse and Microsoft’s Accessibility Insights. When you hear about tools catching 57% of issues, those tools are often powered by this engine. A key reason developers trust Axe is its “zero false positives” policy. Its rules are designed to flag only confirmed problems, and anything the engine can’t decide on its own is reported separately for manual review, so little time is wasted on wild goose chases.

Deque has taken this a step further with axe Assistant, an AI chatbot specifically for accessibility. Unlike general-purpose AIs, it’s trained on Deque’s extensive library of accessibility knowledge. This allows it to give highly accurate, context-specific answers to questions about code, design, or compliance. Furthermore, tools like axe DevTools integrate these checks directly into development pipelines, stopping accessibility bugs before they ever get merged into the main codebase.

WAVE AI-Powered Improvements

WAVE, from WebAIM, has been a go-to tool for years because of its visual approach. Instead of just generating a report, it injects icons and indicators directly onto your web page, showing you exactly where the problems are. This makes it incredibly effective for manual testing and for teaching people about accessibility.

While WAVE doesn’t market itself as an “AI tool” in the same way as others, its philosophy perfectly complements an AI-assisted workflow. Automated AI scans can find the low-hanging fruit quickly. Then, a tool like WAVE allows a human tester to efficiently review the page, investigate the issues AI has flagged, and use their own judgment to find problems AI missed. The combination of AI for speed and WAVE for human-centric evaluation creates a powerful and practical testing process.

Lighthouse AI Updates

Lighthouse is built into the Google Chrome browser, making it one of the most accessible testing tools available. Its big advantage is that it doesn’t just report on accessibility. It gives you a single report that also covers performance, SEO, and other best practices. This encourages teams to think about accessibility as part of the overall user experience, not a separate chore.

Lighthouse can be automated and integrated into continuous integration (CI) pipelines. For example, you can set a rule to fail a build if the accessibility score drops below 95. This acts as a safety net, preventing regressions and ensuring a consistent standard of quality. However, it’s critical to remember that a perfect 100 on Lighthouse does not mean your site is perfectly accessible. It just means you’ve passed all the automated checks it knows how to run. The real work of manual testing begins after you get that 100.
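As a rough illustration of the build rule described above, the sketch below reads a Lighthouse JSON report and decides whether the accessibility score clears a threshold. It assumes the report was produced with Lighthouse’s JSON output, where category scores are stored as numbers between 0 and 1. The function name `accessibility_gate` and the default of 95 are choices made for this example. Lighthouse CI ships its own assertion mechanism, which this hand-rolled check does not replace.

```python
import json


def accessibility_gate(report_json, minimum=95):
    """Return (passed, score) for a Lighthouse JSON report given as a string.

    Assumes the usual report layout: categories -> accessibility -> score,
    with the score expressed as a fraction between 0 and 1.
    """
    report = json.loads(report_json)
    raw = report["categories"]["accessibility"]["score"]
    if raw is None:
        # Lighthouse could not compute a score; treat that as a failure.
        return False, None
    score = round(raw * 100)
    return score >= minimum, score
```

In a pipeline, a small wrapper script could call this function on the saved report and exit with a non-zero status when `passed` is false, which is what causes most CI systems to fail the build.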

Human Testing: What AI Still Misses in 2025

For all its advancements, AI is still just a machine following rules. It has no understanding of human experience. The most critical accessibility barriers are not simple code violations; they are breaks in logic, confusing interactions, and dead ends that leave users frustrated and unable to complete their tasks. This is where human testing remains irreplaceable. An experienced human tester, especially one who uses assistive technology, will find serious issues that every automated tool on the market will miss.

User Experience Context Issues

An AI can verify the presence of an accessibility feature, but it can’t judge its quality or usefulness. For example, it can check if an image has alt text, but it can’t tell you if that alt text is a helpful description (“A red 2025 Ford Mustang parked in front of a modern glass building”) or useless spam (“car auto automobile vehicle fast red shiny”).
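The gap between presence and quality is easy to see in code. The sketch below is a simplified presence check (the names `AltCollector` and `images_missing_alt` are hypothetical) that lists images with no `alt` attribute at all. An empty `alt=""` is treated as present, since that is the usual way to mark a decorative image.

```python
from html.parser import HTMLParser


class AltCollector(HTMLParser):
    """Records (src, alt) for every <img>; alt is None when the attribute is absent."""

    def __init__(self):
        super().__init__()
        self.images = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attrs = dict(attrs)
            self.images.append((attrs.get("src"), attrs.get("alt")))


def images_missing_alt(html):
    """Return the src of each image that has no alt attribute (presence check only)."""
    collector = AltCollector()
    collector.feed(html)
    return [src for src, alt in collector.images if alt is None]
```

Run against a page containing both example alt texts above, this check passes the helpful description and the keyword spam alike. It only objects to an image with no `alt` attribute, which is exactly why a human still has to read the text.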

Similarly, an AI can’t determine if link text makes sense out of context. A screen reader user might navigate a page by pulling up a list of all the links. If your page is full of links that just say “Click Here” or “Learn More,” that list is meaningless. An AI won’t flag this as an error, but a human tester will spot it immediately as a major usability problem. The tool checks the code; the human checks the experience.

Complex Interactive Element Testing

Modern web applications are full of dynamic and interactive components: pop-up modals, complex date pickers, drag-and-drop interfaces, and auto-updating content. These are often black holes for automated testers.

One of the most common and severe issues that AI misses is the “keyboard trap.” This happens when a keyboard user can tab into a component, like a newsletter signup pop-up, but cannot tab back out to the main page. They are literally stuck. An automated scan can’t simulate this user journey and will not detect the trap.

Testing these complex elements requires a person to try to use them with only a keyboard, or with a screen reader, and see what happens. Can they open the dropdown menu? Can they dismiss the modal? Is new content that appears on the screen announced by the screen reader? These are pass/fail tests for usability that AI simply cannot perform.

Screen Reader Compatibility Nuances

There is a world of difference between a site that is “technically compliant” and one that provides a good screen reader experience. An AI cannot simulate what it’s like to listen to a website.

A common problem is the reading order. A page might look perfectly logical to a sighted user, but if the underlying code structure (the DOM order) is a mess, a screen reader will read the content in a nonsensical sequence. It might read the footer before the main content, or jump from the first column to the third. An AI scan won’t catch this because it isn’t listening; it’s just reading the code.

Another nuance is the use of ARIA for dynamic updates. For example, when a user adds an item to a shopping cart, a message like “Item added!” should be announced to a screen reader user. Developers use specific ARIA attributes to make this happen. An AI might check if the attribute is there, but it can’t confirm if the announcement actually works as intended across different screen reader and browser combinations.
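For a sense of what “checking if the attribute is there” amounts to, here is a small static sketch. It assumes the page uses ARIA live regions for its announcements, meaning an `aria-live` attribute or a role such as `status` or `alert` that implies one. `LiveRegionFinder` and `find_live_regions` are names invented for this example.

```python
from html.parser import HTMLParser

# Roles that imply a live region without an explicit aria-live attribute.
LIVE_ROLES = {"status", "alert", "log"}


class LiveRegionFinder(HTMLParser):
    """Collects elements that are declared as ARIA live regions."""

    def __init__(self):
        super().__init__()
        self.regions = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        live = attrs.get("aria-live")
        role = attrs.get("role")
        if live in ("polite", "assertive") or role in LIVE_ROLES:
            self.regions.append(
                {"tag": tag, "id": attrs.get("id"), "aria-live": live, "role": role}
            )


def find_live_regions(html):
    """Return the live regions declared in the markup (static check only)."""
    finder = LiveRegionFinder()
    finder.feed(html)
    return finder.regions
```

A non-empty result only shows that a live region is declared in the markup. Whether “Item added!” is actually spoken, and spoken at the right moment, still has to be confirmed by listening with real screen reader and browser combinations.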

Cost Analysis: AI vs Human Testing by Business Size

The right accessibility testing strategy depends heavily on a company’s size, resources, and goals. There is no one-size-fits-all answer, and the cost-benefit analysis looks different for a small business compared to a large enterprise.

Hybrid Testing Approach: Maximizing ROI with Combined Methods

The debate over AI versus human testing presents a false choice. The most effective, mature, and cost-efficient accessibility strategies do not pick one over the other; they combine them. A hybrid approach uses AI and automation for what they do best (speed and scale) while relying on human expertise for what machines can’t do: understanding context and usability. This creates a system of checks and balances that catches the widest range of issues.

The process starts by “shifting left.” This means integrating automated AI-powered checks early in the development process. Tools like Axe can be plugged into a developer’s workflow, providing instant feedback and flagging simple bugs before they become bigger problems. This is the most cost-effective way to handle the basics.

Next, human expertise comes in for manual testing. This is essential for complex user flows like a checkout process, interactive features like a store locator map, and for the final pre-launch review. Human testers navigate with keyboards, use screen readers, and assess the overall experience.

Future Predictions: AI Testing Evolution Through 2026

The field of AI-powered accessibility testing is going to keep moving quickly. Looking ahead, we can expect a few key changes.

First, the accuracy of AI tools will continue to get better. Projections suggest that automated detection rates could approach 70% by the end of 2025, and they won’t stop there. This will be driven by better machine learning models that are trained on vast datasets of accessible and inaccessible code.

Second, expect even deeper integration into developer tools. Accessibility checks will become a standard, built-in feature of code editors, design programs, and deployment pipelines. The goal is to make checking for accessibility as normal as checking for spelling errors.

Finally, the focus of AI will likely shift from just finding problems to providing smarter and more context-aware solutions. Instead of just flagging an error, future AIs might offer several code-fix suggestions, complete with explanations of the pros and cons of each approach. As the web moves toward new standards like WCAG 3.0, which will focus more on actual user outcomes, our testing methods will need to evolve, and AI will be a central part of that evolution.

AI Accessibility Testing in 2025

The 57% figure, however, doesn’t represent 57% of the barriers a person with a disability will actually encounter. AI, in its current form, doesn’t understand context, frustration, or logic. It can’t tell you if your “accessible” website is actually usable. So, while AI offers incredible speed and efficiency, it’s only one part of the story. The other part involves human experience, a factor that remains the most important piece of the accessibility puzzle.

Using Automated Tools for Quick Insights (Accessibility-Test.org Scanner)

Automated testing tools provide a fast way to identify many common accessibility issues. They can quickly scan your website and point out problems that might be difficult for people with disabilities to overcome.

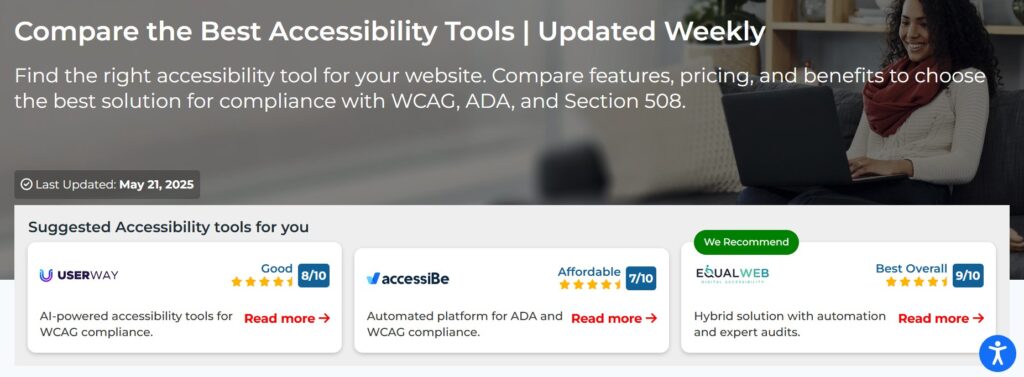

Visit Our Tools Comparison Page!

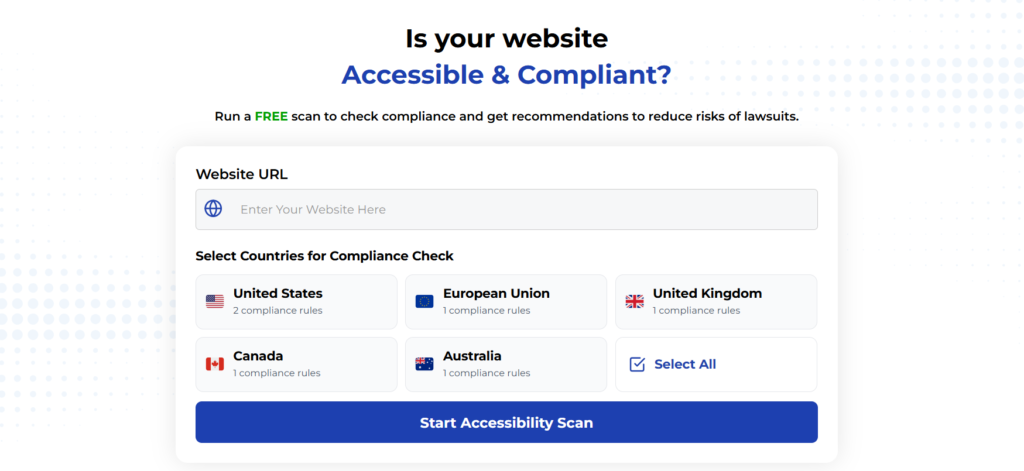

Run a FREE scan to check compliance and get recommendations to reduce risks of lawsuits

Final Thoughts

Finally, the gold standard is to include usability testing with people with disabilities. Nothing provides more valuable insight than watching someone who relies on assistive technology try to accomplish a task on your website. Their feedback moves you beyond simple compliance to creating an experience that is truly inclusive and works for everyone.

Start your compliance journey, update your accessibility statement, and keep the European Accessibility Act (EAA) top of mind in every project going forward.
